SHOGUN 4.1.0

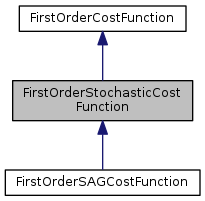

The base class for first-order stochastic cost functions.

This class defines the interface used by first-order stochastic minimizers.

The cost function must be written as a finite sum of sample-specific costs. For example, the least squares cost function is

\[ f(w)=\frac{ \sum_i{ (y_i-w^T x_i)^2 } }{2} \]

where \((y_i,x_i)\) is the i-th sample, \(y_i\) is its label, and \(x_i\) is its feature vector.
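To make the "finite sum of sample-specific costs" requirement concrete, here is a minimal sketch of the least squares example. It uses plain `std::vector<double>` rather than Shogun's `SGVector`, and the names `dot_product`, `sample_cost`, and `total_cost` are hypothetical helpers chosen for illustration; they are not part of the Shogun API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: inner product w^T x of two equally sized vectors.
double dot_product(const std::vector<double>& w, const std::vector<double>& x) {
    assert(w.size() == x.size());
    double sum = 0.0;
    for (std::size_t j = 0; j < w.size(); ++j) sum += w[j] * x[j];
    return sum;
}

// Cost contributed by one sample: f_i(w) = (y_i - w^T x_i)^2 / 2.
double sample_cost(const std::vector<double>& w,
                   const std::vector<double>& x, double y) {
    const double residual = y - dot_product(w, x);
    return 0.5 * residual * residual;
}

// Full cost f(w): the finite sum of the per-sample costs.
double total_cost(const std::vector<double>& w,
                  const std::vector<std::vector<double>>& xs,
                  const std::vector<double>& ys) {
    assert(xs.size() == ys.size());
    double total = 0.0;
    for (std::size_t i = 0; i < xs.size(); ++i) {
        total += sample_cost(w, xs[i], ys[i]);
    }
    return total;
}
```

Keeping the per-sample term in its own function mirrors the split that a stochastic minimizer relies on: it only ever needs one term of the sum at a time.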

Defined at line 50 of file FirstOrderStochasticCostFunction.h.

Public Member Functions

| Return type | Member function |
| --- | --- |
| virtual void | begin_sample ()=0 |
| virtual bool | next_sample ()=0 |
| virtual SGVector< float64_t > | get_gradient ()=0 |
| virtual float64_t | get_cost ()=0 |
| virtual SGVector< float64_t > | obtain_variable_reference ()=0 |

| virtual void begin_sample () | pure virtual |

Initializes the generation of a sample sequence.

| virtual float64_t get_cost () | pure virtual |

Gets the cost for the current target variables.

For least squares, that is the value of \(f(w)\).

Implemented in FirstOrderSAGCostFunction.

| virtual SGVector< float64_t > get_gradient () | pure virtual |

Gets the gradient of the current sample with respect to the target variables.

Warning: this method returns the single-sample gradient \( \frac{\partial f_i(w) }{\partial w} \), not the full sum \(\sum_i{ \frac{\partial f_i(w) }{\partial w} }\).

For the least squares cost function, that is the value of \(\frac{\partial f_i(w) }{\partial w}\) at the current \(w\), where the index \(i\) is the sample selected by next_sample().

Implemented in FirstOrderSAGCostFunction.
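As a worked instance for the least squares example (derived here from the cost definition at the top of this page, not quoted from the Shogun sources), write the cost as a sum of per-sample terms and differentiate one term:

\[ f(w)=\sum_i{ f_i(w) }, \qquad f_i(w)=\frac{ (y_i-w^T x_i)^2 }{2} \]

\[ \frac{\partial f_i(w) }{\partial w} = -(y_i-w^T x_i)\, x_i \]

The sample gradient is therefore the feature vector \(x_i\) scaled by the negative residual of the i-th sample, and summing it over all \(i\) recovers the full gradient of \(f(w)\).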

| virtual bool next_sample () | pure virtual |

Gets the next sample.

| virtual SGVector< float64_t > obtain_variable_reference () | pure virtual |

Obtains a reference to the target variables. Minimizers modify the target variables in place.

This method is called by FirstOrderMinimizer::minimize().

For least squares, that is \(w\).
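The following sketch shows how a minimizer might drive the five methods documented above. It is a self-contained illustration under stated assumptions, not Shogun's implementation: `LeastSquaresCost` and `sgd_minimize` are hypothetical names, `std::vector<double>` stands in for `SGVector<float64_t>`, `next_sample()` is assumed to return `false` once the sample sequence is exhausted, and the update rule is plain stochastic gradient descent with a fixed learning rate.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical stand-in that mirrors the documented method names.
class LeastSquaresCost {
public:
    LeastSquaresCost(std::vector<std::vector<double>> xs, std::vector<double> ys)
        : xs_(std::move(xs)), ys_(std::move(ys)),
          w_(xs_.empty() ? 0 : xs_[0].size(), 0.0) {
        assert(xs_.size() == ys_.size());
    }

    // Restart the sample sequence.
    void begin_sample() { next_ = 0; }

    // Advance to the next sample; false means the sequence is exhausted (assumption).
    bool next_sample() {
        if (next_ >= xs_.size()) return false;
        current_ = next_++;
        return true;
    }

    // Gradient of the current sample only: -(y_i - w^T x_i) * x_i.
    std::vector<double> get_gradient() const {
        const std::vector<double>& x = xs_[current_];
        const double residual = ys_[current_] - dot(w_, x);
        std::vector<double> g(w_.size());
        for (std::size_t j = 0; j < g.size(); ++j) g[j] = -residual * x[j];
        return g;
    }

    // Full cost f(w), summed over all samples.
    double get_cost() const {
        double total = 0.0;
        for (std::size_t i = 0; i < xs_.size(); ++i) {
            const double residual = ys_[i] - dot(w_, xs_[i]);
            total += 0.5 * residual * residual;
        }
        return total;
    }

    // Reference to w, so the minimizer can update it in place.
    std::vector<double>& obtain_variable_reference() { return w_; }

private:
    static double dot(const std::vector<double>& a, const std::vector<double>& b) {
        double sum = 0.0;
        for (std::size_t j = 0; j < a.size(); ++j) sum += a[j] * b[j];
        return sum;
    }

    std::vector<std::vector<double>> xs_;
    std::vector<double> ys_;
    std::vector<double> w_;
    std::size_t next_ = 0;
    std::size_t current_ = 0;
};

// Hypothetical minimizer loop: one pass over the samples per epoch.
void sgd_minimize(LeastSquaresCost& cost, double learning_rate, int epochs) {
    std::vector<double>& w = cost.obtain_variable_reference();
    for (int epoch = 0; epoch < epochs; ++epoch) {
        cost.begin_sample();
        while (cost.next_sample()) {
            const std::vector<double> g = cost.get_gradient();
            for (std::size_t j = 0; j < w.size(); ++j) w[j] -= learning_rate * g[j];
        }
    }
}
```

Because `obtain_variable_reference()` hands back a reference, the update inside the loop changes the state that `get_gradient()` and `get_cost()` read on the next call, which is the in-place behavior described above.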