As mentioned before, SHOGUN interfaces to several programming languages and toolkits such as Matlab(tm), R, Python and Octave. The following sections give an overview of the static interface commands of SHOGUN. For the static interfaces we tried to keep the command syntax consistent across all the different languages. In some cases this was not possible, and we document the subtle differences in syntax and semantics in the respective toolkit. Instead of reading through all of this, we suggest having a look at the large number of examples available in the examples/interface directory, for example examples/R or examples/python.

Overview of Static Interfaces & Testing the Installation

Interface Commands

Command Reference

Since octave is nowadays on par with matlab, a single documentation for both interfaces is sufficient. It is based on octave (matlab can be used synonymously).

To start SHOGUN in octave, start octave and check that SHOGUN is correctly installed by typing (let ">" be the octave prompt)

sg('help')

inside of octave. This should show you some help text.

To start SHOGUN in python, start python and check that SHOGUN is correctly installed by typing (let ">" be the python prompt)

from sg import sg

sg('help')

inside of python. This should show you some help text.
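For a scripted check, the following minimal sketch tests whether a module named sg can be located without importing it. The helper name shogun_available is hypothetical and not part of SHOGUN; only the module name sg is taken from the import shown above.

```python
import importlib.util


def shogun_available(module_name="sg"):
    """Return True if the named top-level module can be located."""
    # find_spec returns None when a top-level module is not installed.
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    if shogun_available():
        print("sg module found; try sg('help') next")
    else:
        print("sg module not found; check the SHOGUN installation")
```

This only confirms that the module can be found; calling sg('help') remains the actual test that the interface works.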

To fire up SHOGUN in R make sure that you have SHOGUN correctly installed in R. You can check this by typing ( let ">" be the R prompt ):

> library()

inside of R. This command should list all R packages that have been installed on your system. You should have an entry like:

sg The SHOGUN Machine Learning Toolbox

After you have made sure that SHOGUN is installed correctly, you can start it via:

> library(sg)

You will see some information about the SHOGUN core (compile options etc.). After this command R and SHOGUN are ready to receive your commands.

In general all commands in SHOGUN are issued using the function sg(...). To invoke the SHOGUN command help one types:

> sg('help')

and then a help text appears giving a short description of all commands.

These functions transfer data from the interface to SHOGUN and back. Suppose you have a matlab or R matrix "features" that contains your training data and you want to register this data. You simply type:

Transfer the features to shogun

sg('set_features', 'TRAIN|TEST', features[, DNABINFILE|<ALPHABET>])

sg('add_features', 'TRAIN|TEST', features[, DNABINFILE|<ALPHABET>])

Features can be char/byte/word/int/real valued matrices, real valued sparse matrices, or strings (lists or cell arrays of strings). When dealing with strings, an alphabet name has to be specified (DNA, RAW, ...). Use 'TRAIN' to tell SHOGUN that this is the data you want to train your classifier on, and 'TEST' for the test data.

In contrast to set_features, add_features will create a combined feature object and append the features to it. This is useful when dealing with a set of different features (real valued and strings) and multiple kernels.

In case a single string was set using set_features, it can be "multiplexed" by sliding a window over it using

sg('from_position_list', 'TRAIN|TEST', winsize, shift[, skip])

sg('obtain_from_sliding_window', winsize, skip)

Deletes the features that were assigned before in the current SHOGUN session.

sg('clean_features')

Obtain the features from SHOGUN

[features]=sg('get_features', 'TRAIN|TEST')

One proceeds similarly when assigning labels to the training data and obtaining labels from SHOGUN. The commands

sg('set_labels', 'TRAIN', trainlab)

[labels]=sg('get_labels', 'TRAIN|TEST')

tell SHOGUN that the labels of the assigned training data reside in trainlab and return the current labels, respectively (note that currently all data is copied into SHOGUN, so modifications to trainlab are local within the interface).

Kernel and distance matrix specific commands, used to create, obtain and set the kernel matrix.

Creating a kernel in shogun

sg('set_kernel', 'KERNELNAME', 'FEATURETYPE', CACHESIZE, PARAMETERS)

sg('add_kernel', WEIGHT, 'KERNELNAME', 'FEATURETYPE', CACHESIZE, PARAMETERS)

Here KERNELNAME is the name of the kernel one wishes to use, FEATURETYPE the type of features (e.g. REAL for standard real-valued feature vectors), CACHESIZE the size of the kernel cache in megabytes and PARAMETERS additional kernel-specific parameters.

The following kernels are implemented in SHOGUN:

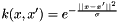

To work with a gaussian kernel on real values one issues:

sg('set_kernel', 'GAUSSIAN', 'TYPE', CACHESIZE, SIGMA)

For example:

sg('set_kernel', 'GAUSSIAN', 'REAL', 40, 1)

creates a gaussian kernel on real values with a cache size of 40MB and a sigma value of one. Available types for the gaussian kernel: REAL, SPARSEREAL.
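To make the role of the sigma parameter concrete, here is a minimal pure-Python sketch of a gaussian kernel value for two real-valued vectors. It assumes the form k(x, y) = exp(-||x - y||^2 / sigma); the exact scaling used by SHOGUN is given in the kernel table and should be checked there. The function name gaussian_kernel is illustrative and not a SHOGUN call.

```python
import math


def gaussian_kernel(x, y, sigma):
    """Gaussian kernel value, assuming k(x, y) = exp(-||x - y||^2 / sigma)."""
    # Squared Euclidean distance between the two vectors.
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-squared_distance / sigma)
```

Under this assumed form, a larger sigma makes the kernel value decay more slowly with distance, and identical vectors always give a value of one.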

A linear kernel is created via:

sg('set_kernel', 'LINEAR', 'TYPE', CACHESIZE)

For example:

sg('add_kernel', 1.0, 'LINEAR', 'REAL', 50)

adds a linear kernel of cache size 50 for real data values, with weight 1.0.

Available types for the linear kernel: BYTE, WORD, CHAR, REAL, SPARSEREAL.

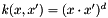

A polynomial kernel is created via:

sg('set_kernel', 'POLY', 'TYPE', CACHESIZE, DEGREE, INHOMOGENE, NORMALIZE)

For example:

sg('add_kernel', 0.1, 'POLY', 'REAL', 50, 3, 0)

adds a polynomial kernel. Available types for the polynomial kernel: REAL, CHAR, SPARSEREAL.
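The DEGREE and INHOMOGENE parameters can be illustrated with a short pure-Python sketch. It assumes the common form k(x, y) = (x·y + c)^d, with c = 1 for the inhomogeneous variant and c = 0 otherwise, and it ignores the NORMALIZE option entirely. The function name poly_kernel is illustrative and not a SHOGUN call.

```python
def poly_kernel(x, y, degree, inhomogeneous):
    """Polynomial kernel value, assuming k(x, y) = (x.y + c)^degree."""
    dot = sum(a * b for a, b in zip(x, y))
    # Assumption: the inhomogeneous variant adds a constant offset of 1.
    offset = 1.0 if inhomogeneous else 0.0
    return (dot + offset) ** degree
```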

A sigmoid kernel is created via:

sg('set_kernel', 'SIGMOID', 'TYPE', CACHESIZE, GAMMA, COEFF)

For example:

sg('set_kernel', 'SIGMOID', 'REAL', 40, 0.1, 0.1)

creates a sigmoid kernel on real values with a cache size of 40MB, a gamma value of 0.1 and a coefficient of 0.1. Available types for the sigmoid kernel: REAL.
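As a rough illustration of how GAMMA and COEFF enter the computation, the sketch below assumes the usual sigmoid kernel form k(x, y) = tanh(gamma * x·y + coeff). The function name sigmoid_kernel is illustrative and not a SHOGUN call.

```python
import math


def sigmoid_kernel(x, y, gamma, coeff):
    """Sigmoid kernel value, assuming k(x, y) = tanh(gamma * x.y + coeff)."""
    dot = sum(a * b for a, b in zip(x, y))
    return math.tanh(gamma * dot + coeff)
```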

Assign a user-defined custom kernel, for which either only the upper triangle may be given (DIAG), or the full matrix (FULL), or the full matrix which is then internally stored as an upper triangle (FULL2DIAG).

sg('set_custom_kernel', kernelmatrix, 'DIAG|FULL|FULL2DIAG')

The get_kernel_matrix and get_distance_matrix commands return the kernel or distance matrix for the current problem.

[D]=sg('get_distance_matrix', 'TRAIN|TEST')

[K]=sg('get_kernel_matrix', 'TRAIN|TEST')

The returned D and K are matrix objects.
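The difference between giving a custom kernel as a full matrix and as an upper triangle can be sketched in plain Python. The element order that set_custom_kernel expects for DIAG is not stated here, so the row-by-row order below is an assumption; both helper names are hypothetical and not SHOGUN calls.

```python
def upper_triangle(full):
    """Return the upper triangle (diagonal included) of a square matrix, row by row."""
    n = len(full)
    return [full[i][j] for i in range(n) for j in range(i, n)]


def from_upper_triangle(values, n):
    """Rebuild the full symmetric n x n matrix from its row-wise upper triangle."""
    full = [[0.0] * n for _ in range(n)]
    k = 0
    for i in range(n):
        for j in range(i, n):
            # A kernel matrix is symmetric, so each value fills two entries.
            full[i][j] = full[j][i] = values[k]
            k += 1
    return full
```

Because a kernel matrix is symmetric, the triangle holds n(n+1)/2 values instead of n*n, which is the saving that the triangular storage offers.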

The get_svm command returns some properties of an SVM such as the Lagrange multipliers alpha, the bias b and the index of the support vectors SV (zero based).

[bias, alphas]=sg('get_svm')

sg('set_classifier', bias, alphas)

The get_svm command returns a list of arguments. set_classifier may be used later on (after creating an SVM classifier) to set alphas and bias again.
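To show how the bias and alphas relate to a prediction, the following sketch evaluates the standard SVM output f(x) = b + sum_i alpha_i k(x_i, x). It assumes that alphas is available as a sequence of (coefficient, zero-based support vector index) pairs and that each coefficient already includes the label sign; the actual layout returned by get_svm should be checked in your interface. The function svm_output and its arguments are hypothetical.

```python
def svm_output(bias, alphas, kernel, train_vectors, x):
    """Evaluate f(x) = bias + sum_i alpha_i * kernel(sv_i, x).

    alphas: iterable of (coefficient, zero-based index into train_vectors).
    kernel: any function taking two vectors and returning a number.
    """
    total = bias
    for alpha, index in alphas:
        total += alpha * kernel(train_vectors[int(index)], x)
    return total
```

For a two-class problem the sign of the returned value would then give the predicted class.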

The result of the classification of the test sample is obtained via:

[result]=sg('classify')

[result]=sg('classify_example', feature_vector_index)

Miscellaneous functions.

Returns the svn version number

sg('get_version')

Gives you a help text.

sg('help')

sg('help', 'CMD')

Sets a debugging log level, useful for tracing errors.

sg('loglevel', 'LEVEL')

For example

> sg('loglevel', 'ALL')

gives you a list of instructions.

Let's get started. Equipped with the above information on the basic SHOGUN commands, you are now able to create your own SHOGUN applications.

Let us discuss an example:

sg('set_features', 'TRAIN', traindat)

sg('set_labels', 'TRAIN', trainlab)

sg('set_kernel', 'GAUSSIAN', 'REAL', 100, 1.0)

sg('new_classifier', 'SVMLIGHT')

sg('c', 20.0)

sg('train_classifier')

sg('set_features', 'TEST', testdat)

out=sg('classify')
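In the python interface, the same command sequence could be wrapped in a small function. The sketch below takes the sg callable as an argument so that it can be tried without SHOGUN; the names train_and_classify and RecordingSg are hypothetical, and RecordingSg is only a stand-in that records commands rather than training anything.

```python
def train_and_classify(sg, traindat, trainlab, testdat,
                       cache_mb=100, sigma=1.0, c=20.0):
    """Issue the commands of the example above through the given sg callable."""
    sg('set_features', 'TRAIN', traindat)
    sg('set_labels', 'TRAIN', trainlab)
    sg('set_kernel', 'GAUSSIAN', 'REAL', cache_mb, sigma)
    sg('new_classifier', 'SVMLIGHT')
    sg('c', c)
    sg('train_classifier')
    sg('set_features', 'TEST', testdat)
    return sg('classify')


class RecordingSg:
    """Stand-in for sg that only records the commands it receives."""

    def __init__(self):
        self.calls = []

    def __call__(self, command, *args):
        self.calls.append((command,) + args)
        # A real sg('classify') returns outputs; the stand-in returns an empty list.
        return [] if command == 'classify' else None
```

With SHOGUN installed, one would presumably pass the real function obtained via from sg import sg in place of a RecordingSg instance.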