|

SHOGUN

4.1.0

|

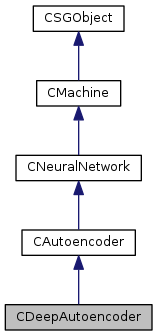

Represents a multi-layer autoencoder.

A deep autoencoder consists of an input layer, multiple encoding layers, and multiple decoding layers. It can be pre-trained as a stack of single layer autoencoders. Fine-tuning can be performed on the entire autoencoder in an unsupervised manner using train(), or in a supervised manner using convert_to_neural_network().

This class supports training normal deep autoencoders and denoising autoencoders [Vincent, 2008]. To use denoising autoencoders set noise_type and noise_parameter to specify the type and strength of the noise (pt_noise_type and pt_noise_parameter for pre-training).

NOTE: LBFGS does not work properly with denoising autoencoders due to their stochastic nature. Use gradient descent instead.

Deep contractive autoencoders [Rifai, 2011] are also supported. To use them, call set_contraction_coefficient() (or use pt_contraction_coefficient for pre-training). Denoising can also be used with contractive autoencoders through noise_type and noise_parameter.

Deep convolutional autoencoders [J Masci, 2011] are also supported. Simply build the autoencoder using CNeuralConvolutionalLayer objects.

NOTE: Contractive convolutional autoencoders are not supported.

If the autoencoder has N layers, encoding layers will be the layers following the input layer up to and including layer (N-1)/2. The rest of the layers are called the decoding layers. Note that the number of encoding layers is the same as the number of decoding layers.

The layers of the autoencoder must be symmetric in the number of neurons about the last encoding layer, that is, layer i must have the same number of neurons as layer N-i-1. For example, a valid structure could be something like: 500->250->100->250->500.
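
The two structural rules above can be sketched in plain C++ without any Shogun classes. The function names `last_encoding_layer` and `is_symmetric` are hypothetical and are not part of the library; the sketch assumes N is odd, which follows from having one input layer plus equal numbers of encoding and decoding layers.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Index of the last encoding layer of an N-layer autoencoder: (N-1)/2.
// Assumes N is odd (input layer + k encoding layers + k decoding layers).
std::size_t last_encoding_layer(std::size_t num_layers)
{
    assert(num_layers >= 1 && num_layers % 2 == 1);
    return (num_layers - 1) / 2;
}

// True if layer i has as many neurons as layer N-i-1 for every i.
bool is_symmetric(const std::vector<int>& num_neurons)
{
    const std::size_t n = num_neurons.size();
    for (std::size_t i = 0; i < n / 2; ++i)
        if (num_neurons[i] != num_neurons[n - i - 1])
            return false;
    return true;
}
```

For the structure 500->250->100->250->500, `is_symmetric` holds and the last encoding layer is layer 2 (the 100-neuron layer).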

When fine-tuning the autoencoder in an unsupervised manner, denoising and contraction can also be used through set_contraction_coefficient() and noise_type and noise_parameter. See CAutoencoder for more details.

Definition at line 87 of file DeepAutoencoder.h.

Protected Attributes | |

| float64_t | m_sigma |

| float64_t | m_contraction_coefficient |

| int32_t | m_num_inputs |

| int32_t | m_num_layers |

| CDynamicObjectArray * | m_layers |

| SGMatrix< bool > | m_adj_matrix |

| int32_t | m_total_num_parameters |

| SGVector< float64_t > | m_params |

| SGVector< bool > | m_param_regularizable |

| SGVector< int32_t > | m_index_offsets |

| int32_t | m_batch_size |

| bool | m_is_training |

| float64_t | m_max_train_time |

| CLabels * | m_labels |

| ESolverType | m_solver_type |

| bool | m_store_model_features |

| bool | m_data_locked |

| CDeepAutoencoder | ( | ) |

default constructor

Definition at line 47 of file DeepAutoencoder.cpp.

| CDeepAutoencoder | ( | CDynamicObjectArray * | layers, |

| float64_t | sigma = 0.01 |

||

| ) |

Constructs and initializes an autoencoder

| layers | An array of CNeuralLayer objects specifying the layers of the autoencoder |

| sigma | Standard deviation of the gaussian used to initialize the weights |

Definition at line 52 of file DeepAutoencoder.cpp.

|

virtual |

Definition at line 102 of file DeepAutoencoder.h.

Applies the machine to data. If data is not specified, applies it to the current features.

| data | (test)data to be classified |

Definition at line 152 of file Machine.cpp.

|

virtualinherited |

Applies the machine to data as a binary classification problem.

Reimplemented from CMachine.

Definition at line 158 of file NeuralNetwork.cpp.

|

virtualinherited |

Applies the machine to data as a latent problem.

Reimplemented in CLinearLatentMachine.

Definition at line 232 of file Machine.cpp.

Applies a locked machine on a set of indices. Error if machine is not locked

| indices | index vector (of locked features) that is predicted |

Definition at line 187 of file Machine.cpp.

|

virtualinherited |

applies a locked machine on a set of indices for binary problems

Reimplemented in CKernelMachine, and CMultitaskLinearMachine.

Definition at line 238 of file Machine.cpp.

|

virtualinherited |

applies a locked machine on a set of indices for latent problems

Definition at line 266 of file Machine.cpp.

|

virtualinherited |

applies a locked machine on a set of indices for multiclass problems

Definition at line 252 of file Machine.cpp.

|

virtualinherited |

applies a locked machine on a set of indices for regression problems

Reimplemented in CKernelMachine.

Definition at line 245 of file Machine.cpp.

|

virtualinherited |

applies a locked machine on a set of indices for structured problems

Definition at line 259 of file Machine.cpp.

|

virtualinherited |

Applies the machine to data as a multiclass classification problem.

Reimplemented from CMachine.

Definition at line 199 of file NeuralNetwork.cpp.

|

virtualinherited |

applies to one vector

Reimplemented in CKernelMachine, CRelaxedTree, CWDSVMOcas, COnlineLinearMachine, CLinearMachine, CMultitaskLinearMachine, CMulticlassMachine, CKNN, CDistanceMachine, CMultitaskLogisticRegression, CMultitaskLeastSquaresRegression, CScatterSVM, CGaussianNaiveBayes, CPluginEstimate, and CFeatureBlockLogisticRegression.

|

virtualinherited |

Applies the machine to data as a regression problem.

Reimplemented from CMachine.

Definition at line 187 of file NeuralNetwork.cpp.

|

virtualinherited |

Applies the machine to data as a structured output (SO) classification problem.

Reimplemented in CLinearStructuredOutputMachine.

Definition at line 226 of file Machine.cpp.

|

inherited |

Builds a dictionary of all parameters in SGObject as well as those of SGObjects that are parameters of this object. The dictionary maps parameters to the objects that own them.

| dict | dictionary of parameters to be built. |

Definition at line 597 of file SGObject.cpp.

|

virtualinherited |

Checks if the gradients computed using backpropagation are correct by comparing them with gradients computed using numerical approximation. Used for testing purposes only.

Gradients are numerically approximated according to:

\[ c = \max(\epsilon x, s) \]

\[ f'(x) = \frac{f(x + c)-f(x - c)}{2c} \]

| approx_epsilon | Constant used during gradient approximation |

| s | Some small value, used to prevent division by zero |

Definition at line 554 of file NeuralNetwork.cpp.
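
The approximation formula above can be illustrated for a scalar function. This is a standalone sketch, not the library's implementation: `numerical_gradient` is a hypothetical name, and the parameters mirror `approx_epsilon` and `s` from the description.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <functional>

// Central-difference approximation of f'(x) with step c = max(epsilon*x, s).
// s keeps the step away from zero when epsilon*x is tiny or negative.
double numerical_gradient(const std::function<double(double)>& f, double x,
                          double approx_epsilon, double s)
{
    assert(s > 0.0);
    const double c = std::max(approx_epsilon * x, s);
    return (f(x + c) - f(x - c)) / (2.0 * c);
}
```

A gradient check would compare this value against the backpropagated gradient for each parameter and flag any large discrepancy.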

|

virtualinherited |

Creates a clone of the current object. This is done via recursively traversing all parameters, which corresponds to a deep copy. Calling equals on the cloned object always returns true although none of the memory of both objects overlaps.

Definition at line 714 of file SGObject.cpp.

Computes the error between the output layer's activations and the given target activations.

| targets | desired values for the network's output, matrix of size num_neurons_output_layer*batch_size |

Reimplemented from CAutoencoder.

Definition at line 201 of file DeepAutoencoder.cpp.

|

protectedvirtualinherited |

Forward propagates the inputs and computes the error between the output layer's activations and the given target activations.

| inputs | inputs to the network, a matrix of size m_num_inputs*m_batch_size |

| targets | desired values for the network's output, matrix of size num_neurons_output_layer*batch_size |

Definition at line 546 of file NeuralNetwork.cpp.

|

protectedvirtualinherited |

Applies backpropagation to compute the gradients of the error with respect to every parameter in the network.

| inputs | inputs to the network, a matrix of size m_num_inputs*m_batch_size |

| targets | desired values for the output layer's activations. matrix of size m_layers[m_num_layers-1].get_num_neurons()*m_batch_size |

| gradients | array to be filled with gradient values. |

Definition at line 467 of file NeuralNetwork.cpp.

|

virtualinherited |

Connects layer i as input to layer j. In order for forward and backpropagation to work correctly, i must be less than j.

Definition at line 75 of file NeuralNetwork.cpp.

|

virtual |

Converts the autoencoder into a neural network for supervised finetuning.

The neural network is formed using the input layer and the encoding layers. If specified, another output layer will be added on top of those layers.

| output_layer | If specified, this layer will be added on top of the last encoding layer |

| sigma | Standard deviation used to initialize the parameters of the output layer |

Definition at line 171 of file DeepAutoencoder.cpp.

Locks the machine on given labels and data. After this call, only train_locked and apply_locked may be called

Only possible if supports_locking() returns true

| labs | labels used for locking |

| features | features used for locking |

Reimplemented in CKernelMachine.

Definition at line 112 of file Machine.cpp.

|

virtualinherited |

Unlocks a locked machine and restores previous state

Reimplemented in CKernelMachine.

Definition at line 143 of file Machine.cpp.

|

virtualinherited |

A deep copy. All the instance variables will also be copied.

Definition at line 198 of file SGObject.cpp.

|

virtualinherited |

Disconnects layer i from layer j

Definition at line 88 of file NeuralNetwork.cpp.

|

virtualinherited |

Removes all connections in the network

Definition at line 93 of file NeuralNetwork.cpp.

Recursively compares the current SGObject to another one. Compares all registered numerical parameters, recursion upon complex (SGObject) parameters. Does not compare pointers!

May be overridden, but please do so with care! Should not be necessary in most cases.

| other | object to compare with |

| accuracy | accuracy to use for comparison (optional) |

| tolerant | allows lenient check on float equality (within accuracy) |

Definition at line 618 of file SGObject.cpp.

Ensures the given features are suitable for use with the network and returns their feature matrix

Definition at line 614 of file NeuralNetwork.cpp.

|

protectedvirtualinherited |

Applies forward propagation, computes the activations of each layer up to layer j

| data | input features |

| j | layer index at which the propagation should stop. If -1, the propagation continues up to the last layer |

Definition at line 439 of file NeuralNetwork.cpp.

|

protectedvirtualinherited |

Applies forward propagation, computes the activations of each layer up to layer j

| inputs | inputs to the network, a matrix of size m_num_inputs*m_batch_size |

| j | layer index at which the propagation should stop. If -1, the propagation continues up to the last layer |

Definition at line 446 of file NeuralNetwork.cpp.

|

virtualinherited |

get classifier type

Reimplemented from CMachine.

Definition at line 188 of file NeuralNetwork.h.

|

inherited |

|

inherited |

|

inherited |

|

virtualinherited |

|

protectedinherited |

returns a pointer to layer i in the network

Definition at line 723 of file NeuralNetwork.cpp.

returns a copy of a layer's parameters array

| i | index of the layer |

Definition at line 712 of file NeuralNetwork.cpp.

|

inherited |

Returns an array holding the network's layers

Definition at line 744 of file NeuralNetwork.cpp.

|

virtualinherited |

returns type of problem machine solves

Reimplemented from CMachine.

Definition at line 675 of file NeuralNetwork.cpp.

|

inherited |

|

inherited |

Definition at line 498 of file SGObject.cpp.

|

inherited |

Returns description of a given parameter string, if it exists. SG_ERROR otherwise

| param_name | name of the parameter |

Definition at line 522 of file SGObject.cpp.

|

inherited |

Returns index of model selection parameter with the provided name

| param_name | name of model selection parameter |

Definition at line 535 of file SGObject.cpp.

|

virtual |

Returns the name of the SGSerializable instance. It MUST BE the CLASS NAME without the prefixed `C`.

Reimplemented from CAutoencoder.

Definition at line 172 of file DeepAutoencoder.h.

|

inherited |

returns the number of inputs the network takes

Definition at line 224 of file NeuralNetwork.h.

|

inherited |

returns the number of neurons in the output layer

Definition at line 739 of file NeuralNetwork.cpp.

|

inherited |

returns the total number of parameters in the network

Definition at line 218 of file NeuralNetwork.h.

return the network's parameter array

Definition at line 221 of file NeuralNetwork.h.

|

inherited |

|

virtualinherited |

Initializes the network

| sigma | standard deviation of the gaussian used to randomly initialize the parameters |

Definition at line 98 of file NeuralNetwork.cpp.

|

inherited |

|

virtualinherited |

If the SGSerializable is a class template then TRUE will be returned and GENERIC is set to the type of the generic.

| generic | set to the type of the generic if returning TRUE |

Definition at line 296 of file SGObject.cpp.

|

protectedvirtualinherited |

check whether the labels are valid.

Subclasses can override this to implement their check of label types.

| lab | the labels being checked, guaranteed to be non-NULL |

Reimplemented from CMachine.

Definition at line 689 of file NeuralNetwork.cpp.

converts the given labels into a matrix suitable for use with network

Definition at line 630 of file NeuralNetwork.cpp.

|

virtualinherited |

Load this object from file. If loading fails (returning FALSE), this object will contain inconsistent data and should not be used!

| file | where to load from |

| prefix | prefix for members |

Definition at line 369 of file SGObject.cpp.

|

protectedvirtualinherited |

Can (optionally) be overridden to post-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::LOAD_SERIALIZABLE_POST is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel, CWeightedDegreePositionStringKernel, CList, CAlphabet, CLinearHMM, CGaussianKernel, CInverseMultiQuadricKernel, CCircularKernel, and CExponentialKernel.

Definition at line 426 of file SGObject.cpp.

|

protectedvirtualinherited |

Can (optionally) be overridden to pre-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::LOAD_SERIALIZABLE_PRE is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CDynamicArray< T >, CDynamicArray< float64_t >, CDynamicArray< float32_t >, CDynamicArray< int32_t >, CDynamicArray< char >, CDynamicArray< bool >, and CDynamicObjectArray.

Definition at line 421 of file SGObject.cpp.

|

virtualinherited |

Definition at line 262 of file SGObject.cpp.

|

virtual |

Pre-trains the deep autoencoder as a stack of autoencoders

If the deep autoencoder has N layers, it is treated as a stack of (N-1)/2 single layer autoencoders. For all \( 1 \le i \le (N-1)/2 \) an autoencoder is formed using layer i-1 as an input layer, layer i as encoding layer, and layer N-i as decoding layer.

For example, if the deep autoencoder has layers L0->L1->L2->L3->L4, two autoencoders will be formed: L0->L1->L4 and L1->L2->L3.

Training parameters for each autoencoder can be set using the pt_* public fields, e.g. pt_optimization_method and pt_contraction_coefficient. Each of those fields is a vector of length (N-1)/2, where the first element sets the parameter for the first autoencoder, the second element sets the parameter for the second autoencoder, and so on. When required, the parameter can be set for all autoencoders using the SGVector::set_const() method.

| data | Training examples |

Definition at line 80 of file DeepAutoencoder.cpp.
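
The way the stack is formed can be sketched as an index computation. The helper `pretraining_stack` below is hypothetical and only enumerates layer indices; it performs no training and is not the library's code.

```cpp
#include <array>
#include <cassert>
#include <vector>

// For an N-layer deep autoencoder, list the (input, encoding, decoding)
// layer indices of each single layer autoencoder used in pre-training:
// (i-1, i, N-i) for i = 1 .. (N-1)/2.
std::vector<std::array<int, 3>> pretraining_stack(int num_layers)
{
    assert(num_layers >= 3 && num_layers % 2 == 1);
    std::vector<std::array<int, 3>> stack;
    for (int i = 1; i <= (num_layers - 1) / 2; ++i)
        stack.push_back(std::array<int, 3>{{i - 1, i, num_layers - i}});
    return stack;
}
```

With five layers this yields the triples (0, 1, 4) and (1, 2, 3), matching the L0->L1->L4 and L1->L2->L3 example.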

|

inherited |

prints all parameters registered for model selection and their type

Definition at line 474 of file SGObject.cpp.

|

virtualinherited |

prints registered parameters out

| prefix | prefix for members |

Definition at line 308 of file SGObject.cpp.

|

virtualinherited |

Connects each layer to the layer after it. That is, connects layer i as input to layer i+1 for all i.

Definition at line 81 of file NeuralNetwork.cpp.

|

virtual |

Forward propagates the data through the autoencoder and returns the activations of the last layer

| data | Input features |

Reimplemented from CAutoencoder.

Definition at line 164 of file DeepAutoencoder.cpp.

|

virtualinherited |

Save this object to file.

| file | where to save the object; will be closed during returning if PREFIX is an empty string. |

| prefix | prefix for members |

Definition at line 314 of file SGObject.cpp.

|

protectedvirtualinherited |

Can (optionally) be overridden to post-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::SAVE_SERIALIZABLE_POST is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel.

Definition at line 436 of file SGObject.cpp.

|

protectedvirtualinherited |

Can (optionally) be overridden to pre-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::SAVE_SERIALIZABLE_PRE is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel, CDynamicArray< T >, CDynamicArray< float64_t >, CDynamicArray< float32_t >, CDynamicArray< int32_t >, CDynamicArray< char >, CDynamicArray< bool >, and CDynamicObjectArray.

Definition at line 431 of file SGObject.cpp.

|

protectedvirtualinherited |

Sets the batch size (the number of train/test cases) the network is expected to deal with. Allocates memory for the activations, local gradients, and input gradients if necessary (if the batch size is different from its previous value).

| batch_size | number of train/test cases the network is expected to deal with. |

Definition at line 604 of file NeuralNetwork.cpp.

|

virtual |

Sets the contraction coefficient

For contractive autoencoders [Rifai, 2011], a term:

\[ \frac{\lambda}{N} \sum_{k=0}^{N-1} \left \| J(x_k) \right \|^2_F \]

is added to the error, where \( \left \| J(x_k) \right \|^2_F \) is the squared Frobenius norm of the Jacobian of the activations of each encoding layer with respect to its inputs, \( N \) is the batch size, and \( \lambda \) is the contraction coefficient.

| coeff | Contraction coefficient |

Reimplemented from CAutoencoder.

Definition at line 214 of file DeepAutoencoder.cpp.
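
To make the penalty term concrete, here is a minimal sketch for a single encoding layer. It assumes a logistic activation h = sigmoid(Wx + b), for which the Jacobian entries are J_ij = h_i (1 - h_i) W_ij; other layer types would need a different Jacobian. The names `Matrix`, `jacobian_frobenius_sq` and `contraction_penalty` are hypothetical, and this is not how the library computes the term internally.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<double>>;

// Squared Frobenius norm of the Jacobian of h = sigmoid(W x + b) w.r.t. x.
// For a logistic layer, J_ij = h_i (1 - h_i) W_ij.
double jacobian_frobenius_sq(const Matrix& W, const std::vector<double>& b,
                             const std::vector<double>& x)
{
    double total = 0.0;
    for (std::size_t i = 0; i < W.size(); ++i)
    {
        double z = b[i], row_sq = 0.0;
        for (std::size_t j = 0; j < x.size(); ++j)
        {
            z += W[i][j] * x[j];
            row_sq += W[i][j] * W[i][j];
        }
        const double h = 1.0 / (1.0 + std::exp(-z));
        const double d = h * (1.0 - h);  // derivative of the sigmoid at z
        total += d * d * row_sq;
    }
    return total;
}

// (lambda / N) * sum_k ||J(x_k)||_F^2 over a batch of N inputs.
double contraction_penalty(const Matrix& W, const std::vector<double>& b,
                           const Matrix& batch, double lambda)
{
    assert(!batch.empty());
    double sum = 0.0;
    for (const auto& x : batch)
        sum += jacobian_frobenius_sq(W, b, x);
    return lambda * sum / static_cast<double>(batch.size());
}
```

A larger \( \lambda \) penalizes sensitivity of the encoding to small input changes more strongly; with \( \lambda = 0 \) the term vanishes.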

|

inherited |

Definition at line 41 of file SGObject.cpp.

|

inherited |

Definition at line 46 of file SGObject.cpp.

|

inherited |

Definition at line 51 of file SGObject.cpp.

|

inherited |

Definition at line 56 of file SGObject.cpp.

|

inherited |

Definition at line 61 of file SGObject.cpp.

|

inherited |

Definition at line 66 of file SGObject.cpp.

|

inherited |

Definition at line 71 of file SGObject.cpp.

|

inherited |

Definition at line 76 of file SGObject.cpp.

|

inherited |

Definition at line 81 of file SGObject.cpp.

|

inherited |

Definition at line 86 of file SGObject.cpp.

|

inherited |

Definition at line 91 of file SGObject.cpp.

|

inherited |

Definition at line 96 of file SGObject.cpp.

|

inherited |

Definition at line 101 of file SGObject.cpp.

|

inherited |

Definition at line 106 of file SGObject.cpp.

|

inherited |

Definition at line 111 of file SGObject.cpp.

|

inherited |

set generic type to T

|

inherited |

|

inherited |

set the parallel object

| parallel | parallel object to use |

Definition at line 241 of file SGObject.cpp.

|

inherited |

set the version object

| version | version object to use |

Definition at line 283 of file SGObject.cpp.

|

virtualinherited |

set labels

| lab | labels |

Reimplemented from CMachine.

Definition at line 696 of file NeuralNetwork.cpp.

|

virtualinherited |

Sets the layers of the network

| layers | An array of CNeuralLayer objects specifying the layers of the network. Must contain at least one input layer. The last layer in the array is treated as the output layer |

Definition at line 55 of file NeuralNetwork.cpp.

|

inherited |

set maximum training time

| t | maximum training time |

Definition at line 82 of file Machine.cpp.

|

inherited |

|

virtualinherited |

Setter for store-model-features-after-training flag

| store_model | whether model should be stored after training |

Definition at line 107 of file Machine.cpp.

|

virtualinherited |

A shallow copy. All the SGObject instance variables will be simply assigned and SG_REF-ed.

Reimplemented in CGaussianKernel.

Definition at line 192 of file SGObject.cpp.

|

protectedvirtualinherited |

Stores feature data of underlying model. After this method has been called, it is possible to change the machine's feature data and call apply(), which is then performed on the training feature data that is part of the machine's model.

Base method, has to be implemented in order to allow cross-validation and model selection.

NOT IMPLEMENTED! Has to be done in subclasses

Reimplemented in CKernelMachine, CKNN, CLinearMulticlassMachine, CTreeMachine< T >, CTreeMachine< ConditionalProbabilityTreeNodeData >, CTreeMachine< RelaxedTreeNodeData >, CTreeMachine< id3TreeNodeData >, CTreeMachine< VwConditionalProbabilityTreeNodeData >, CTreeMachine< CARTreeNodeData >, CTreeMachine< C45TreeNodeData >, CTreeMachine< CHAIDTreeNodeData >, CTreeMachine< NbodyTreeNodeData >, CLinearMachine, CGaussianProcessMachine, CHierarchical, CDistanceMachine, CKernelMulticlassMachine, and CLinearStructuredOutputMachine.

|

virtualinherited |

Reimplemented in CKernelMachine, and CMultitaskLinearMachine.

|

virtualinherited |

Trains the autoencoder

| data | Training examples |

Reimplemented from CMachine.

Definition at line 96 of file Autoencoder.cpp.

|

protectedvirtualinherited |

trains the network using gradient descent

Definition at line 261 of file NeuralNetwork.cpp.

|

protectedvirtualinherited |

trains the network using L-BFGS

Definition at line 357 of file NeuralNetwork.cpp.

Trains a locked machine on a set of indices. Error if machine is not locked

NOT IMPLEMENTED

| indices | index vector (of locked features) that is used for training |

Reimplemented in CKernelMachine, and CMultitaskLinearMachine.

|

protectedvirtualinherited |

|

protectedvirtualinherited |

returns whether the machine requires labels for training

Reimplemented in COnlineLinearMachine, CHierarchical, CLinearLatentMachine, CVwConditionalProbabilityTree, CConditionalProbabilityTree, and CLibSVMOneClass.

|

virtual |

Forward propagates the data through the autoencoder and returns the activations of the last encoding layer (layer (N-1)/2)

| data | Input features |

Reimplemented from CAutoencoder.

Definition at line 157 of file DeepAutoencoder.cpp.

|

inherited |

unset generic type

this has to be called in classes specializing a template class

Definition at line 303 of file SGObject.cpp.

|

virtualinherited |

Updates the hash of current parameter combination

Definition at line 248 of file SGObject.cpp.

|

inherited |

Probability that a hidden layer neuron will be dropped out. When using this, the recommended value is 0.5.

default value 0.0 (no dropout)

For more details on dropout, see paper [Hinton, 2012]

Definition at line 375 of file NeuralNetwork.h.

|

inherited |

Probability that an input layer neuron will be dropped out. When using this, a good value might be 0.2.

default value 0.0 (no dropout)

For more details on dropout, see this paper [Hinton, 2012]

Definition at line 385 of file NeuralNetwork.h.

|

inherited |

Convergence criterion. Training stops when (E' - E)/E < epsilon, where E is the error at the current iteration and E' is the error at the previous iteration. Default value is 1.0e-5.

Definition at line 400 of file NeuralNetwork.h.
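
The stopping rule can be written as a one-line predicate. This sketch follows the inequality literally; `has_converged` is a hypothetical name, and the assertion that the current error is positive is an added assumption to avoid dividing by zero.

```cpp
#include <cassert>

// True when the relative improvement (E' - E) / E falls below epsilon,
// where E is the current error and E' is the previous error.
bool has_converged(double previous_error, double current_error, double epsilon)
{
    assert(current_error > 0.0);
    return (previous_error - current_error) / current_error < epsilon;
}
```

Read literally, the inequality is also satisfied when the error increases between iterations, since the left-hand side is then negative.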

|

inherited |

Used to damp the error fluctuations when stochastic gradient descent is used. Damping is done according to: error_damped(i) = c*error(i) + (1-c)*error_damped(i-1), where c is the damping coefficient.

If -1, the damping coefficient is automatically computed according to: c = 0.99*gd_mini_batch_size/training_set_size + 1e-2

Default value is -1.

Definition at line 444 of file NeuralNetwork.h.
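
Both formulas can be sketched directly. The function names are hypothetical; only the arithmetic comes from the description above.

```cpp
#include <cassert>

// Damping coefficient used when the configured value is -1:
// c = 0.99 * mini_batch_size / training_set_size + 1e-2
double auto_damping_coeff(int mini_batch_size, int training_set_size)
{
    assert(training_set_size > 0);
    return 0.99 * mini_batch_size / training_set_size + 1e-2;
}

// error_damped(i) = c * error(i) + (1 - c) * error_damped(i - 1)
double damp_error(double error, double previous_damped, double c)
{
    return c * error + (1.0 - c) * previous_damped;
}
```

When the mini-batch covers the whole training set, the automatic coefficient is approximately 1, so the damped error tracks the raw error; small mini-batches give a coefficient near 0.01 and therefore heavy smoothing.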

|

inherited |

gradient descent learning rate, default value 0.1

Definition at line 415 of file NeuralNetwork.h.

|

inherited |

Gradient descent learning rate decay. The learning rate is updated at each iteration i according to: alpha(i) = decay*alpha(i-1). Default value is 1.0 (no decay).

Definition at line 422 of file NeuralNetwork.h.
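
The decay rule produces a geometric schedule. The sketch below assumes that alpha(0) equals the configured learning rate, which the description does not state explicitly; `learning_rate_schedule` is a hypothetical helper.

```cpp
#include <cassert>
#include <vector>

// Learning rates for the first num_iterations iterations, assuming
// alpha(0) = initial_rate and alpha(i) = decay * alpha(i-1) afterwards.
std::vector<double> learning_rate_schedule(double initial_rate, double decay,
                                           int num_iterations)
{
    assert(num_iterations >= 0);
    std::vector<double> rates;
    double alpha = initial_rate;
    for (int i = 0; i < num_iterations; ++i)
    {
        rates.push_back(alpha);
        alpha *= decay;
    }
    return rates;
}
```

With a decay of 1.0 every entry equals the initial rate, which is the "no decay" default.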

|

inherited |

Size of the mini-batch used during gradient descent training. If 0, full-batch training is performed. Default value is 0.

Definition at line 412 of file NeuralNetwork.h.

|

inherited |

gradient descent momentum multiplier

default value is 0.9

For more details on momentum, see this paper [Sutskever, 2013]

Definition at line 432 of file NeuralNetwork.h.

|

inherited |

io

Definition at line 369 of file SGObject.h.

|

inherited |

L1 Regularization coeff, default value is 0.0

Definition at line 365 of file NeuralNetwork.h.

|

inherited |

L2 Regularization coeff, default value is 0.0

Definition at line 362 of file NeuralNetwork.h.

|

protectedinherited |

Describes the connections in the network: if there's a connection from layer i to layer j then m_adj_matrix(i,j) = 1.

Definition at line 458 of file NeuralNetwork.h.

|

protectedinherited |

number of train/test cases the network is expected to deal with. Default value is 1

Definition at line 480 of file NeuralNetwork.h.

|

protectedinherited |

For contractive autoencoders [Rifai, 2011], a term:

\[ \frac{\lambda}{N} \sum_{k=0}^{N-1} \left \| J(x_k) \right \|^2_F \]

is added to the error, where \( \left \| J(x_k) \right \|^2_F \) is the squared Frobenius norm of the Jacobian of the activations of the hidden layer with respect to its inputs, \( N \) is the batch size, and \( \lambda \) is the contraction coefficient.

Default value is 0.0.

Definition at line 210 of file Autoencoder.h.

|

protectedinherited |

|

inherited |

parameters wrt which we can compute gradients

Definition at line 384 of file SGObject.h.

|

inherited |

Hash of parameter values

Definition at line 387 of file SGObject.h.

|

protectedinherited |

Offsets specifying where each layer's parameters and parameter gradients are stored, i.e., layer i's parameters are stored at m_params + m_index_offsets[i].

Definition at line 475 of file NeuralNetwork.h.
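
One way such offsets could be derived is a running sum over per-layer parameter counts. This sketch assumes the layers' parameters are packed contiguously in layer order inside one flat array, which the description suggests but does not spell out; `compute_index_offsets` is a hypothetical name.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Offsets into one flat parameter array, given each layer's parameter count.
// Layer i's parameters occupy [offsets[i], offsets[i] + counts[i]).
std::vector<int> compute_index_offsets(const std::vector<int>& counts)
{
    std::vector<int> offsets(counts.size());
    int running = 0;
    for (std::size_t i = 0; i < counts.size(); ++i)
    {
        assert(counts[i] >= 0);
        offsets[i] = running;
        running += counts[i];
    }
    return offsets;
}
```

Under this layout, the final running sum is the total number of parameters in the network.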

|

protectedinherited |

True if the network is currently being trained. Initial value is false.

Definition at line 485 of file NeuralNetwork.h.

|

protectedinherited |

network's layers

Definition at line 453 of file NeuralNetwork.h.

|

protectedinherited |

|

inherited |

model selection parameters

Definition at line 381 of file SGObject.h.

|

protectedinherited |

number of neurons in the input layer

Definition at line 447 of file NeuralNetwork.h.

|

protectedinherited |

number of layers

Definition at line 450 of file NeuralNetwork.h.

|

protectedinherited |

Array that specifies which parameters are to be regularized. This is used to turn off regularization for bias parameters

Definition at line 469 of file NeuralNetwork.h.

|

inherited |

parameters

Definition at line 378 of file SGObject.h.

array where all the parameters of the network are stored

Definition at line 464 of file NeuralNetwork.h.

|

protected |

Standard deviation of the gaussian used to initialize the parameters

Definition at line 260 of file DeepAutoencoder.h.

|

protectedinherited |

|

protectedinherited |

|

protectedinherited |

total number of parameters in the network

Definition at line 461 of file NeuralNetwork.h.

|

inherited |

Maximum allowable L2 norm for a neuron's weights. When using this, a good value might be 15.

default value -1 (max-norm regularization disabled)

Definition at line 392 of file NeuralNetwork.h.

|

inherited |

Maximum number of iterations over the training set. If 0, training will continue until convergence. Default value is 0.

Definition at line 406 of file NeuralNetwork.h.

|

inherited |

Controls the strength of the noise, depending on noise_type

Definition at line 198 of file Autoencoder.h.

|

inherited |

Noise type for denoising autoencoders.

If set to AENT_DROPOUT, inputs are randomly set to zero during each iteration of training with probability noise_parameter.

If set to AENT_GAUSSIAN, gaussian noise with zero mean and noise_parameter standard deviation is added to the inputs.

Default value is AENT_NONE

Definition at line 195 of file Autoencoder.h.
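
The two corruption modes can be sketched on a plain vector of inputs. The `NoiseType` enum is a hypothetical stand-in for the AENT_* values named above, and `corrupt` is an illustrative helper rather than the library's routine; the random number generator is passed in explicitly so results can be reproduced.

```cpp
#include <cassert>
#include <random>
#include <vector>

// Hypothetical stand-in for AENT_NONE, AENT_DROPOUT and AENT_GAUSSIAN.
enum class NoiseType { None, Dropout, Gaussian };

// Returns a corrupted copy of the inputs.
// Dropout: each input is set to zero with probability noise_parameter.
// Gaussian: zero-mean noise with standard deviation noise_parameter is added.
std::vector<double> corrupt(const std::vector<double>& inputs, NoiseType type,
                            double noise_parameter, std::mt19937& rng)
{
    std::vector<double> out(inputs);
    if (type == NoiseType::Dropout)
    {
        assert(noise_parameter >= 0.0 && noise_parameter <= 1.0);
        std::bernoulli_distribution drop(noise_parameter);
        for (double& v : out)
            if (drop(rng))
                v = 0.0;
    }
    else if (type == NoiseType::Gaussian && noise_parameter > 0.0)
    {
        std::normal_distribution<double> noise(0.0, noise_parameter);
        for (double& v : out)
            v += noise(rng);
    }
    return out;
}
```

In a denoising setup the corrupted copy is fed forward while the reconstruction error is measured against the clean inputs, which is why the function leaves its argument untouched.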

|

inherited |

Optimization method, default is NNOM_LBFGS

Definition at line 359 of file NeuralNetwork.h.

|

inherited |

parallel

Definition at line 372 of file SGObject.h.

Contraction coefficient (see CAutoencoder::set_contraction_coefficient()) for pre-training each encoding layer. Default value is 0.0 for all layers.

Definition at line 205 of file DeepAutoencoder.h.

CAutoencoder::epsilon for pre-training each encoding layer. Default value is 1.0e-5 for all layers.

Definition at line 225 of file DeepAutoencoder.h.

CAutoencoder::gd_error_damping_coeff for pre-training each encoding layer. Default value is -1 for all layers.

Definition at line 255 of file DeepAutoencoder.h.

CAutoencoder::gd_learning_rate for pre-training each encoding layer. Default value is 0.1 for all layers.

Definition at line 240 of file DeepAutoencoder.h.

CAutoencoder::gd_learning_rate_decay for pre-training each encoding layer. Default value is 1.0 for all layers.

Definition at line 245 of file DeepAutoencoder.h.

| SGVector<int32_t> pt_gd_mini_batch_size |

CAutoencoder::gd_mini_batch_size for pre-training each encoding layer. Default value is 0 for all layers.

Definition at line 235 of file DeepAutoencoder.h.

CAutoencoder::gd_momentum for pre-training each encoding layer. Default value is 0.9 for all layers.

Definition at line 250 of file DeepAutoencoder.h.

CAutoencoder::l1_coefficient for pre-training each encoding layer. Default value is 0.0 for all layers.

Definition at line 220 of file DeepAutoencoder.h.

CAutoencoder::l2_coefficient for pre-training each encoding layer. Default value is 0.0 for all layers.

Definition at line 215 of file DeepAutoencoder.h.

| SGVector<int32_t> pt_max_num_epochs |

CAutoencoder::max_num_epochs for pre-training each encoding layer. Default value is 0 for all layers.

Definition at line 230 of file DeepAutoencoder.h.

CAutoencoder::noise_parameter for pre-training each encoding layer. Default value is 0.0 for all layers.

Definition at line 199 of file DeepAutoencoder.h.

| SGVector<int32_t> pt_noise_type |

CAutoencoder::noise_type for pre-training each encoding layer. Default value is AENT_NONE for all layers.

Definition at line 194 of file DeepAutoencoder.h.

| SGVector<int32_t> pt_optimization_method |

CAutoencoder::optimization_method for pre-training each encoding layer. Default value is NNOM_LBFGS for all layers.

Definition at line 210 of file DeepAutoencoder.h.

|

inherited |

version

Definition at line 375 of file SGObject.h.