|

SHOGUN

4.1.0

|

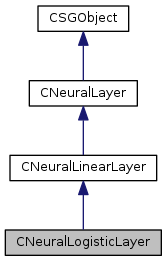

Neural layer with linear neurons and a logistic activation function. It can be used as a hidden layer or an output layer.

When used as an output layer, a squared error measure is used.

Definition at line 49 of file NeuralLogisticLayer.h.

Public Member Functions | |

| CNeuralLogisticLayer () | |

| CNeuralLogisticLayer (int32_t num_neurons) | |

| virtual | ~CNeuralLogisticLayer () |

| virtual void | compute_activations (SGVector< float64_t > parameters, CDynamicObjectArray *layers) |

| virtual float64_t | compute_contraction_term (SGVector< float64_t > parameters) |

| virtual void | compute_contraction_term_gradients (SGVector< float64_t > parameters, SGVector< float64_t > gradients) |

| virtual void | compute_local_gradients (SGMatrix< float64_t > targets) |

| virtual const char * | get_name () const |

| virtual void | initialize_neural_layer (CDynamicObjectArray *layers, SGVector< int32_t > input_indices) |

| virtual void | initialize_parameters (SGVector< float64_t > parameters, SGVector< bool > parameter_regularizable, float64_t sigma) |

| virtual void | compute_activations (SGMatrix< float64_t > inputs) |

| virtual void | compute_gradients (SGVector< float64_t > parameters, SGMatrix< float64_t > targets, CDynamicObjectArray *layers, SGVector< float64_t > parameter_gradients) |

| virtual float64_t | compute_error (SGMatrix< float64_t > targets) |

| virtual void | enforce_max_norm (SGVector< float64_t > parameters, float64_t max_norm) |

| virtual void | set_batch_size (int32_t batch_size) |

| virtual bool | is_input () |

| virtual void | dropout_activations () |

| virtual int32_t | get_num_neurons () |

| virtual int32_t | get_width () |

| virtual int32_t | get_height () |

| virtual void | set_num_neurons (int32_t num_neurons) |

| virtual int32_t | get_num_parameters () |

| virtual SGMatrix< float64_t > | get_activations () |

| virtual SGMatrix< float64_t > | get_activation_gradients () |

| virtual SGMatrix< float64_t > | get_local_gradients () |

| virtual SGVector< int32_t > | get_input_indices () |

| virtual CSGObject * | shallow_copy () const |

| virtual CSGObject * | deep_copy () const |

| virtual bool | is_generic (EPrimitiveType *generic) const |

| template<class T > | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| template<> | |

| void | set_generic () |

| void | unset_generic () |

| virtual void | print_serializable (const char *prefix="") |

| virtual bool | save_serializable (CSerializableFile *file, const char *prefix="") |

| virtual bool | load_serializable (CSerializableFile *file, const char *prefix="") |

| void | set_global_io (SGIO *io) |

| SGIO * | get_global_io () |

| void | set_global_parallel (Parallel *parallel) |

| Parallel * | get_global_parallel () |

| void | set_global_version (Version *version) |

| Version * | get_global_version () |

| SGStringList< char > | get_modelsel_names () |

| void | print_modsel_params () |

| char * | get_modsel_param_descr (const char *param_name) |

| index_t | get_modsel_param_index (const char *param_name) |

| void | build_gradient_parameter_dictionary (CMap< TParameter *, CSGObject * > *dict) |

| virtual void | update_parameter_hash () |

| virtual bool | parameter_hash_changed () |

| virtual bool | equals (CSGObject *other, float64_t accuracy=0.0, bool tolerant=false) |

| virtual CSGObject * | clone () |

Public Attributes | |

| bool | is_training |

| float64_t | dropout_prop |

| float64_t | contraction_coefficient |

| ENLAutoencoderPosition | autoencoder_position |

| SGIO * | io |

| Parallel * | parallel |

| Version * | version |

| Parameter * | m_parameters |

| Parameter * | m_model_selection_parameters |

| Parameter * | m_gradient_parameters |

| uint32_t | m_hash |

Protected Member Functions | |

| virtual void | load_serializable_pre () throw (ShogunException) |

| virtual void | load_serializable_post () throw (ShogunException) |

| virtual void | save_serializable_pre () throw (ShogunException) |

| virtual void | save_serializable_post () throw (ShogunException) |

Protected Attributes | |

| int32_t | m_num_neurons |

| int32_t | m_width |

| int32_t | m_height |

| int32_t | m_num_parameters |

| SGVector< int32_t > | m_input_indices |

| SGVector< int32_t > | m_input_sizes |

| int32_t | m_batch_size |

| SGMatrix< float64_t > | m_activations |

| SGMatrix< float64_t > | m_activation_gradients |

| SGMatrix< float64_t > | m_local_gradients |

| SGMatrix< bool > | m_dropout_mask |

default constructor

Definition at line 40 of file NeuralLogisticLayer.cpp.

| CNeuralLogisticLayer | ( | int32_t | num_neurons | ) |

Constructor

| num_neurons | Number of neurons in this layer |

Definition at line 44 of file NeuralLogisticLayer.cpp.

|

virtual |

Definition at line 61 of file NeuralLogisticLayer.h.

|

inherited |

Builds a dictionary of all parameters in SGObject as well as those of SGObjects that are parameters of this object. The dictionary maps parameters to the objects that own them.

| dict | dictionary of parameters to be built. |

Definition at line 597 of file SGObject.cpp.

|

virtual inherited |

Creates a clone of the current object. This is done via recursively traversing all parameters, which corresponds to a deep copy. Calling equals on the cloned object always returns true although none of the memory of both objects overlaps.

Definition at line 714 of file SGObject.cpp.

|

virtual |

Computes the activations of the neurons in this layer. Results should be stored in m_activations. To be used only with non-input layers.

| parameters | Vector of size get_num_parameters(), contains the parameters of the layer |

| layers | Array of layers that form the network that this layer is being used with |

Reimplemented from CNeuralLinearLayer.

Definition at line 49 of file NeuralLogisticLayer.cpp.
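The forward pass described above can be sketched for a single input column as follows. This is a minimal illustration, assuming the layer computes `logistic(W x + b)`; it uses plain `std::vector` rather than `SGVector`/`SGMatrix`, and the names `logistic` and `logistic_layer_forward` as well as the row-major weight layout are hypothetical, not part of the Shogun API.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Logistic (sigmoid) activation: 1 / (1 + exp(-z)).
double logistic(double z)
{
    return 1.0 / (1.0 + std::exp(-z));
}

// Hypothetical helper, not a Shogun function.
// weights is row-major (num_neurons x num_inputs); biases has num_neurons entries.
std::vector<double> logistic_layer_forward(
    const std::vector<double>& weights,
    const std::vector<double>& biases,
    const std::vector<double>& input)
{
    const std::size_t num_neurons = biases.size();
    const std::size_t num_inputs = input.size();
    assert(weights.size() == num_neurons * num_inputs);

    std::vector<double> activations(num_neurons);
    for (std::size_t j = 0; j < num_neurons; ++j)
    {
        double z = biases[j]; // pre-activation of neuron j
        for (std::size_t i = 0; i < num_inputs; ++i)
            z += weights[j * num_inputs + i] * input[i];
        activations[j] = logistic(z);
    }
    return activations;
}
```

Every activation lies strictly between 0 and 1, which is why the layer pairs naturally with targets in that range when used as an output layer.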

Computes the activations of the neurons in this layer. Results should be stored in m_activations. To be used only with input layers.

| inputs | activations of the neurons in the previous layer, matrix of size previous_layer_num_neurons * batch_size |

Reimplemented in CNeuralInputLayer.

Definition at line 153 of file NeuralLayer.h.

Computes

\[ \frac{\lambda}{N} \sum_{k=0}^{N-1} \left \| J(x_k) \right \|^2_F \]

where \( \left \| J(x_k) \right \|^2_F \) is the squared Frobenius norm of the Jacobian of the activations of the hidden layer with respect to its inputs, \( N \) is the batch size, and \( \lambda \) is the contraction coefficient.

Should be implemented by layers that support being used as a hidden layer in a contractive autoencoder.

| parameters | Vector of size get_num_parameters(), contains the parameters of the layer |

Reimplemented from CNeuralLinearLayer.

Definition at line 60 of file NeuralLogisticLayer.cpp.
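For a logistic layer with activations `a = logistic(W x + b)`, the chain rule gives Jacobian entries `a_j (1 - a_j) W_ji`, so the squared Frobenius norm reduces to a sum over neurons of `(a_j (1 - a_j))^2` times the squared norm of that neuron's weight row. The sketch below evaluates the term under that assumption. The function name, argument layout, and use of `std::vector` are hypothetical and do not mirror the Shogun implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper, not a Shogun function.
// weights: row-major (num_neurons x num_inputs).
// activations: one vector of num_neurons logistic outputs per example in the batch.
double contraction_term(
    const std::vector<double>& weights,
    std::size_t num_inputs,
    const std::vector<std::vector<double>>& activations,
    double contraction_coefficient)
{
    assert(num_inputs > 0 && !activations.empty());
    assert(weights.size() % num_inputs == 0);
    const std::size_t num_neurons = weights.size() / num_inputs;

    // Squared L2 norm of each neuron's weight row.
    std::vector<double> row_norm_sq(num_neurons, 0.0);
    for (std::size_t j = 0; j < num_neurons; ++j)
        for (std::size_t i = 0; i < num_inputs; ++i)
            row_norm_sq[j] += weights[j * num_inputs + i] * weights[j * num_inputs + i];

    double sum = 0.0;
    for (const std::vector<double>& a : activations)
    {
        assert(a.size() == num_neurons);
        for (std::size_t j = 0; j < num_neurons; ++j)
        {
            const double d = a[j] * (1.0 - a[j]); // derivative of the logistic function
            sum += d * d * row_norm_sq[j];
        }
    }
    // lambda / N * sum over the batch of ||J(x_k)||_F^2
    return contraction_coefficient * sum / static_cast<double>(activations.size());
}
```

Saturated neurons (activations near 0 or 1) contribute almost nothing to the term, since the logistic derivative vanishes there.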

|

virtual |

Adds the gradients of

\[ \frac{\lambda}{N} \sum_{k=0}^{N-1} \left \| J(x_k) \right \|^2_F \]

to the gradients vector, where \( \left \| J(x_k) \right \|^2_F \) is the squared Frobenius norm of the Jacobian of the activations of the hidden layer with respect to its inputs, \( N \) is the batch size, and \( \lambda \) is the contraction coefficient.

Should be implemented by layers that support being used as a hidden layer in a contractive autoencoder.

| parameters | Vector of size get_num_parameters(), contains the parameters of the layer |

| gradients | Vector of size get_num_parameters(). Gradients of the contraction term will be added to it |

Reimplemented from CNeuralLinearLayer.

Definition at line 85 of file NeuralLogisticLayer.cpp.

Computes the error between the layer's current activations and the given target activations. Should only be used with output layers

| targets | desired values for the layer's activations, matrix of size num_neurons*batch_size |

Reimplemented from CNeuralLayer.

Reimplemented in CNeuralSoftmaxLayer.

Definition at line 260 of file NeuralLinearLayer.cpp.
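A squared error measure between activations and targets can be sketched as below. The factor of 0.5 and the division by the batch size are assumptions made for this illustration, and `squared_error` is a hypothetical name; the matrices are flattened into `std::vector` for brevity.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper, not a Shogun function.
// activations and targets hold num_neurons * batch_size values each.
double squared_error(
    const std::vector<double>& activations,
    const std::vector<double>& targets,
    std::size_t batch_size)
{
    assert(activations.size() == targets.size());
    assert(batch_size > 0);

    double sum = 0.0;
    for (std::size_t i = 0; i < activations.size(); ++i)
    {
        const double diff = targets[i] - activations[i];
        sum += diff * diff;
    }
    // Assumed scaling: half the summed squared differences, averaged over the batch.
    return 0.5 * sum / static_cast<double>(batch_size);
}
```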

|

virtual inherited |

Computes the gradients that are relevant to this layer:

- The gradients of the error with respect to the layer's parameters
- The gradients of the error with respect to the layer's inputs

Input gradients for layer i that connects into this layer as input are added to m_layers.element(i).get_activation_gradients()

Deriving classes should make sure to account for dropout [Hinton, 2012] during gradient computations

| parameters | Vector of size get_num_parameters(), contains the parameters of the layer |

| targets | a matrix of size num_neurons*batch_size. If the layer is being used as an output layer, targets is the desired values for the layer's activations, otherwise it's an empty matrix |

| layers | Array of layers that form the network that this layer is being used with |

| parameter_gradients | Vector of size get_num_parameters(). To be filled with gradients of the error with respect to each parameter of the layer |

Reimplemented from CNeuralLayer.

Definition at line 135 of file NeuralLinearLayer.cpp.

Computes the gradients of the error with respect to this layer's pre-activations. Results are stored in m_local_gradients.

This is used by compute_gradients() and can be overridden to implement layers with different activation functions.

| targets | a matrix of size num_neurons*batch_size. If the layer is being used as an output layer, targets is the desired values for the layer's activations, otherwise it's an empty matrix |

Reimplemented from CNeuralLinearLayer.

Definition at line 114 of file NeuralLogisticLayer.cpp.
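For a logistic activation, the gradient with respect to a pre-activation is the gradient with respect to the activation multiplied by `a (1 - a)`. The sketch below shows both the hidden-layer case and the output-layer case. The function names are hypothetical, and the output-layer scaling assumes an error of `0.5 / batch_size * sum (t - a)^2`, which is an assumption of this example.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper, not a Shogun function.
// Converts gradients with respect to the activations into gradients with
// respect to the pre-activations, using d(logistic)/dz = a * (1 - a).
std::vector<double> logistic_local_gradients(
    const std::vector<double>& activations,
    const std::vector<double>& activation_gradients)
{
    assert(activations.size() == activation_gradients.size());
    std::vector<double> local(activations.size());
    for (std::size_t i = 0; i < activations.size(); ++i)
        local[i] = activation_gradients[i] * activations[i] * (1.0 - activations[i]);
    return local;
}

// Output-layer case. Assumes dE/da = (a - t) / batch_size, which follows
// from the squared error scaling stated in the lead-in.
std::vector<double> output_local_gradients(
    const std::vector<double>& activations,
    const std::vector<double>& targets,
    std::size_t batch_size)
{
    assert(activations.size() == targets.size());
    assert(batch_size > 0);
    std::vector<double> activation_gradients(activations.size());
    for (std::size_t i = 0; i < activations.size(); ++i)
        activation_gradients[i] =
            (activations[i] - targets[i]) / static_cast<double>(batch_size);
    return logistic_local_gradients(activations, activation_gradients);
}
```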

|

virtual inherited |

A deep copy. All the instance variables will also be copied.

Definition at line 198 of file SGObject.cpp.

|

virtual inherited |

Applies dropout [Hinton, 2012] to the activations of the layer

If is_training is true, fills m_dropout_mask with random values (according to dropout_prop) and multiplies it into the activations, otherwise, multiplies the activations by (1-dropout_prop) to compensate for using dropout during training

Definition at line 90 of file NeuralLayer.cpp.
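The two branches described above can be sketched as follows. This is an illustration only: `apply_dropout` is a hypothetical name, the mask is drawn with `std::bernoulli_distribution`, and the containers are `std::vector` rather than Shogun matrices.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical helper, not a Shogun function.
void apply_dropout(
    std::vector<double>& activations,
    std::vector<bool>& dropout_mask,
    double dropout_prop,
    bool is_training,
    std::mt19937& rng)
{
    assert(dropout_prop >= 0.0 && dropout_prop <= 1.0);
    if (is_training)
    {
        // Keep each neuron with probability 1 - dropout_prop.
        std::bernoulli_distribution keep(1.0 - dropout_prop);
        dropout_mask.resize(activations.size());
        for (std::size_t i = 0; i < activations.size(); ++i)
        {
            dropout_mask[i] = keep(rng);
            if (!dropout_mask[i])
                activations[i] = 0.0; // neuron is dropped for this iteration
        }
    }
    else
    {
        // Outside training, scale to compensate for the units dropped during training.
        for (double& a : activations)
            a *= (1.0 - dropout_prop);
    }
}
```

Scaling by `1 - dropout_prop` at test time keeps the expected input to the next layer the same as it was during training.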

Constrains the weights of each neuron in the layer to have an L2 norm of at most max_norm

| parameters | pointer to the layer's parameters, array of size get_num_parameters() |

| max_norm | maximum allowable norm for a neuron's weights |

Reimplemented from CNeuralLayer.

Definition at line 271 of file NeuralLinearLayer.cpp.
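A max-norm constraint of this kind can be sketched by rescaling any neuron whose weight vector exceeds the limit. The name `clip_row_norms` and the row-major weight layout are hypothetical; the sketch only touches weights, and how biases are treated is not stated here.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper, not a Shogun function.
// weights: row-major (num_neurons x num_inputs), modified in place.
void clip_row_norms(std::vector<double>& weights, std::size_t num_inputs, double max_norm)
{
    assert(num_inputs > 0 && weights.size() % num_inputs == 0);
    assert(max_norm > 0.0);
    const std::size_t num_neurons = weights.size() / num_inputs;

    for (std::size_t j = 0; j < num_neurons; ++j)
    {
        double norm_sq = 0.0;
        for (std::size_t i = 0; i < num_inputs; ++i)
            norm_sq += weights[j * num_inputs + i] * weights[j * num_inputs + i];

        const double norm = std::sqrt(norm_sq);
        if (norm > max_norm)
        {
            // Rescale so that this neuron's weights have L2 norm exactly max_norm.
            const double scale = max_norm / norm;
            for (std::size_t i = 0; i < num_inputs; ++i)
                weights[j * num_inputs + i] *= scale;
        }
    }
}
```

Neurons already within the limit are left unchanged, so the constraint only acts as a projection when it is violated.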

Recursively compares the current SGObject to another one. Compares all registered numerical parameters, recursion upon complex (SGObject) parameters. Does not compare pointers!

May be overridden, but please do so with care. This should not be necessary in most cases.

| other | object to compare with |

| accuracy | accuracy to use for comparison (optional) |

| tolerant | allows lenient check on float equality (within accuracy) |

Definition at line 618 of file SGObject.cpp.

Gets the layer's activation gradients, a matrix of size num_neurons * batch_size

Definition at line 294 of file NeuralLayer.h.

Gets the layer's activations, a matrix of size num_neurons * batch_size

Definition at line 287 of file NeuralLayer.h.

|

inherited |

|

inherited |

|

inherited |

|

virtual inherited |

Returns the height assuming that the layer's activations are interpreted as images (i.e. for convolutional nets)

Definition at line 265 of file NeuralLayer.h.

|

virtual inherited |

Gets the indices of the layers that are connected to this layer as input

Definition at line 313 of file NeuralLayer.h.

Gets the layer's local gradients, a matrix of size num_neurons * batch_size

Definition at line 304 of file NeuralLayer.h.

|

inherited |

Definition at line 498 of file SGObject.cpp.

|

inherited |

Returns description of a given parameter string, if it exists. SG_ERROR otherwise

| param_name | name of the parameter |

Definition at line 522 of file SGObject.cpp.

|

inherited |

Returns the index of the model selection parameter with the provided name

| param_name | name of model selection parameter |

Definition at line 535 of file SGObject.cpp.

|

virtual |

Returns the name of the SGSerializable instance. It MUST BE the CLASS NAME without the prefixed `C'.

Reimplemented from CNeuralLinearLayer.

Definition at line 120 of file NeuralLogisticLayer.h.

|

virtual inherited |

Gets the number of neurons in the layer

Definition at line 251 of file NeuralLayer.h.

|

virtual inherited |

Gets the number of parameters used in this layer

Definition at line 281 of file NeuralLayer.h.

|

virtual inherited |

Returns the width assuming that the layer's activations are interpreted as images (i.e. for convolutional nets)

Definition at line 258 of file NeuralLayer.h.

|

virtual inherited |

Initializes the layer, computes the number of parameters needed for the layer

| layers | Array of layers that form the network that this layer is being used with |

| input_indices | Indices of the layers that are connected to this layer as input |

Reimplemented from CNeuralLayer.

Definition at line 53 of file NeuralLinearLayer.cpp.

|

virtual inherited |

Initializes the layer's parameters. The layer should fill the given arrays with the initial value for its parameters

| parameters | Vector of size get_num_parameters() |

| parameter_regularizable | Vector of size get_num_parameters(). This controls which of the layer's parameters are subject to regularization, i.e. to turn off regularization for parameter i, set parameter_regularizable[i] = false. This is usually used to turn off regularization for bias parameters. |

| sigma | standard deviation of the Gaussian used to randomly initialize the parameters |

Reimplemented from CNeuralLayer.

Definition at line 63 of file NeuralLinearLayer.cpp.
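The initialization contract above can be sketched as follows: every parameter is drawn from a zero-mean Gaussian with standard deviation `sigma`, and the regularization flag is switched off for biases. The struct, the function name, and the layout (biases first, then weights) are assumptions of this example rather than documented behaviour.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

struct LayerParameters
{
    std::vector<double> values;      // initial parameter values
    std::vector<bool> regularizable; // false where regularization is turned off
};

// Hypothetical helper, not a Shogun function.
// Assumed layout: num_neurons biases first, then num_neurons * num_inputs weights.
LayerParameters initialize_parameters_sketch(
    std::size_t num_neurons,
    std::size_t num_inputs,
    double sigma,
    std::mt19937& rng)
{
    assert(sigma > 0.0);
    const std::size_t num_parameters = num_neurons * (num_inputs + 1);
    std::normal_distribution<double> gaussian(0.0, sigma);

    LayerParameters p;
    p.values.resize(num_parameters);
    p.regularizable.resize(num_parameters);
    for (std::size_t i = 0; i < num_parameters; ++i)
    {
        p.values[i] = gaussian(rng);
        p.regularizable[i] = (i >= num_neurons); // biases are not regularized
    }
    return p;
}
```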

|

virtual inherited |

If the SGSerializable is a class template then TRUE will be returned and GENERIC is set to the type of the generic.

| generic | set to the type of the generic if returning TRUE |

Definition at line 296 of file SGObject.cpp.

|

virtual inherited |

Returns true if the layer is an input layer. Input layers are the root layers of a network, that is, they don't receive signals from other layers; they receive signals from the input features of the network.

Local and activation gradients are not computed for input layers

Reimplemented in CNeuralInputLayer.

Definition at line 127 of file NeuralLayer.h.

|

virtual inherited |

Load this object from file. If loading fails (returning FALSE), this object will contain inconsistent data and should not be used!

| file | where to load from |

| prefix | prefix for members |

Definition at line 369 of file SGObject.cpp.

|

protected virtual inherited |

Can (optionally) be overridden to post-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::LOAD_SERIALIZABLE_POST is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel, CWeightedDegreePositionStringKernel, CList, CAlphabet, CLinearHMM, CGaussianKernel, CInverseMultiQuadricKernel, CCircularKernel, and CExponentialKernel.

Definition at line 426 of file SGObject.cpp.

|

protected virtual inherited |

Can (optionally) be overridden to pre-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::LOAD_SERIALIZABLE_PRE is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CDynamicArray< T >, CDynamicArray< float64_t >, CDynamicArray< float32_t >, CDynamicArray< int32_t >, CDynamicArray< char >, CDynamicArray< bool >, and CDynamicObjectArray.

Definition at line 421 of file SGObject.cpp.

|

virtual inherited |

Definition at line 262 of file SGObject.cpp.

|

inherited |

Prints all parameters registered for model selection and their types

Definition at line 474 of file SGObject.cpp.

|

virtual inherited |

Prints out the registered parameters

| prefix | prefix for members |

Definition at line 308 of file SGObject.cpp.

|

virtual inherited |

Save this object to file.

| file | where to save the object; will be closed on return if PREFIX is an empty string. |

| prefix | prefix for members |

Definition at line 314 of file SGObject.cpp.

|

protected virtual inherited |

Can (optionally) be overridden to post-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::SAVE_SERIALIZABLE_POST is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel.

Definition at line 436 of file SGObject.cpp.

|

protected virtual inherited |

Can (optionally) be overridden to pre-initialize some member variables which are not PARAMETER::ADD'ed. Make sure that at first the overridden method BASE_CLASS::SAVE_SERIALIZABLE_PRE is called.

| ShogunException | will be thrown if an error occurs. |

Reimplemented in CKernel, CDynamicArray< T >, CDynamicArray< float64_t >, CDynamicArray< float32_t >, CDynamicArray< int32_t >, CDynamicArray< char >, CDynamicArray< bool >, and CDynamicObjectArray.

Definition at line 431 of file SGObject.cpp.

|

virtual inherited |

Sets the batch_size and allocates memory for m_activations and m_activation_gradients accordingly. Must be called before forward or backward propagation is performed

| batch_size | number of training/test cases the network is currently working with |

Reimplemented in CNeuralConvolutionalLayer.

Definition at line 75 of file NeuralLayer.cpp.

|

inherited |

Definition at line 41 of file SGObject.cpp.

|

inherited |

Definition at line 46 of file SGObject.cpp.

|

inherited |

Definition at line 51 of file SGObject.cpp.

|

inherited |

Definition at line 56 of file SGObject.cpp.

|

inherited |

Definition at line 61 of file SGObject.cpp.

|

inherited |

Definition at line 66 of file SGObject.cpp.

|

inherited |

Definition at line 71 of file SGObject.cpp.

|

inherited |

Definition at line 76 of file SGObject.cpp.

|

inherited |

Definition at line 81 of file SGObject.cpp.

|

inherited |

Definition at line 86 of file SGObject.cpp.

|

inherited |

Definition at line 91 of file SGObject.cpp.

|

inherited |

Definition at line 96 of file SGObject.cpp.

|

inherited |

Definition at line 101 of file SGObject.cpp.

|

inherited |

Definition at line 106 of file SGObject.cpp.

|

inherited |

Definition at line 111 of file SGObject.cpp.

|

inherited |

set generic type to T

|

inherited |

|

inherited |

set the parallel object

| parallel | parallel object to use |

Definition at line 241 of file SGObject.cpp.

|

inherited |

set the version object

| version | version object to use |

Definition at line 283 of file SGObject.cpp.

|

virtual inherited |

Sets the number of neurons in the layer

| num_neurons | number of neurons in the layer |

Definition at line 271 of file NeuralLayer.h.

|

virtual inherited |

A shallow copy. All the SGObject instance variables will be simply assigned and SG_REF-ed.

Reimplemented in CGaussianKernel.

Definition at line 192 of file SGObject.cpp.

|

inherited |

unset generic type

this has to be called in classes specializing a template class

Definition at line 303 of file SGObject.cpp.

|

virtual inherited |

Updates the hash of current parameter combination

Definition at line 248 of file SGObject.cpp.

|

inherited |

For autoencoders, specifies the position of the layer in the autoencoder, i.e. an encoding layer or a decoding layer. Default value is NLAP_NONE.

Definition at line 343 of file NeuralLayer.h.

|

inherited |

For hidden layers in contractive autoencoders [Rifai, 2011], a term:

\[ \frac{\lambda}{N} \sum_{k=0}^{N-1} \left \| J(x_k) \right \|^2_F \]

is added to the error, where \( \left \| J(x_k) \right \|^2_F \) is the squared Frobenius norm of the Jacobian of the activations of the hidden layer with respect to its inputs, \( N \) is the batch size, and \( \lambda \) is the contraction coefficient.

Default value is 0.0.

Definition at line 338 of file NeuralLayer.h.

|

inherited |

Probability of dropping out a neuron in the layer

Definition at line 327 of file NeuralLayer.h.

|

inherited |

io

Definition at line 369 of file SGObject.h.

|

inherited |

Should be true if the layer is currently used during training. Initial value is false.

Definition at line 324 of file NeuralLayer.h.

Gradients of the error with respect to the layer's inputs. Size is previous_layer_num_neurons * batch_size.

Definition at line 381 of file NeuralLayer.h.

Activations of the neurons in this layer. Size is num_neurons * batch_size.

Definition at line 376 of file NeuralLayer.h.

|

protected inherited |

number of training/test cases the network is currently working with

Definition at line 371 of file NeuralLayer.h.

|

protected inherited |

Binary mask that determines whether a neuron will be kept or dropped out during the current iteration of training. Size is num_neurons * batch_size.

Definition at line 393 of file NeuralLayer.h.

|

inherited |

parameters wrt which we can compute gradients

Definition at line 384 of file SGObject.h.

|

inherited |

Hash of parameter values

Definition at line 387 of file SGObject.h.

|

protected inherited |

Height of the image (if the layer's activations are to be interpreted as images). Default value is 1.

Definition at line 357 of file NeuralLayer.h.

|

protected inherited |

Indices of the layers that are connected to this layer as input

Definition at line 363 of file NeuralLayer.h.

|

protected inherited |

Number of neurons in the layers that are connected to this layer as input

Definition at line 368 of file NeuralLayer.h.

Gradients of the error with respect to the layer's pre-activations. This is usually used as a buffer when computing the input gradients. Size is num_neurons * batch_size.

Definition at line 387 of file NeuralLayer.h.

|

inherited |

model selection parameters

Definition at line 381 of file SGObject.h.

|

protected inherited |

Number of neurons in this layer

Definition at line 347 of file NeuralLayer.h.

|

protected inherited |

Number of parameters in this layer

Definition at line 360 of file NeuralLayer.h.

|

inherited |

parameters

Definition at line 378 of file SGObject.h.

|

protected inherited |

Width of the image (if the layer's activations are to be interpreted as images). Default value is m_num_neurons.

Definition at line 352 of file NeuralLayer.h.

|

inherited |

parallel

Definition at line 372 of file SGObject.h.

|

inherited |

version

Definition at line 375 of file SGObject.h.